AI verification

-

FailSafe: Multi-Agent Engine to Stop AI Hallucinations

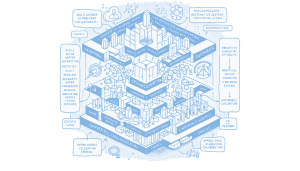

FailSafe is a new verification engine built to address "Snowball Hallucinations" and sycophancy in Retrieval-Augmented Generation (RAG) systems. It uses a multi-layered approach: a statistical heuristic firewall first filters out irrelevant inputs, and a decomposition layer using FastCoref and MiniLM then breaks complex text into simpler claims. The core of the system is a debate among three agents, The Logician, The Skeptic, and The Researcher, each with a distinct role intended to ensure rigorous fact-checking and prevent premature consensus. This matters because it aims to make AI-generated information more reliable and accurate by stopping misinformation from propagating.
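The layered flow described above (filter, decompose, debate) can be illustrated with a toy sketch. This is not FailSafe's code: every function name here is hypothetical, the firewall is a simple word-count and letter-ratio check, the decomposition is a naive sentence split standing in for FastCoref and MiniLM, and the three agents are keyword stubs rather than LLM calls. The one design point it tries to show is that a claim is accepted only when all three agents agree, so a single dissent blocks premature consensus.

```python
import re
from typing import Callable, Dict, List


def tokens(text: str) -> set:
    """Lowercased alphanumeric tokens, punctuation stripped."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def passes_firewall(text: str, min_words: int = 4, min_alpha_ratio: float = 0.6) -> bool:
    """Assumed heuristic: reject very short or mostly non-alphabetic input."""
    if len(text.split()) < min_words:
        return False
    letters = sum(ch.isalpha() for ch in text)
    return letters / len(text) >= min_alpha_ratio


def decompose(text: str) -> List[str]:
    """Naive stand-in for the decomposition layer: one claim per sentence."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def logician(claim: str, evidence: List[str]) -> bool:
    """Stub: a claim needs at least three distinct tokens to be checkable."""
    return len(tokens(claim)) >= 3


def skeptic(claim: str, evidence: List[str]) -> bool:
    """Stub: objects to claims that use absolute wording."""
    return not (tokens(claim) & {"always", "never", "all", "none"})


def researcher(claim: str, evidence: List[str]) -> bool:
    """Stub: supports a claim if a passage shares at least half of its tokens."""
    claim_tokens = tokens(claim)
    if not claim_tokens:
        return False
    return any(
        len(claim_tokens & tokens(passage)) / len(claim_tokens) >= 0.5
        for passage in evidence
    )


AGENTS: List[Callable[[str, List[str]], bool]] = [logician, skeptic, researcher]


def verify(text: str, evidence: List[str]) -> Dict[str, bool]:
    """Return each extracted claim with True only if every agent supports it."""
    if not passes_firewall(text):
        return {}
    return {
        claim: all(agent(claim, evidence) for agent in AGENTS)
        for claim in decompose(text)
    }
```

Calling `verify` with a two-sentence text and a single evidence passage returns one verdict per sentence; in a real system each stub would be replaced by a model call with its own prompt and role.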

-

Local LLMs and Extreme News: Reality vs Hoax

Using local large language models (LLMs) to verify an extreme news event, such as the US attacking Venezuela and capturing its leaders, highlights how hard it is for AI to distinguish reality from misinformation. Despite accessing credible sources like Reuters and the New York Times, the Qwen Research model initially classified the event as a hoax because it seemed improbable. This underscores the limitations of smaller LLMs in processing real-time, extreme events and the importance of rules like Evidence Authority and Hoax Classification for improving their reliability. Testing with larger models like GPT-OSS:120B showed improved skepticism and verification processes, indicating that more advanced systems may handle breaking news more accurately. Why this matters: Understanding the limitations of AI in processing real-time events is crucial for improving its reliability and ensuring accurate information dissemination.
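The summary names the Evidence Authority and Hoax Classification rules without defining them, so the sketch below is only one plausible reading: confirmations from trusted outlets override the model's sense that an event is improbable, and the "hoax" label is reserved for implausible claims with no authoritative confirmation at all. The allow-list, the thresholds, the label strings, and the `Source` type are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical allow-list of outlets treated as authoritative.
AUTHORITATIVE = {"reuters.com", "nytimes.com"}


@dataclass
class Source:
    domain: str     # where the report was found
    confirms: bool  # whether the report confirms the claim


def classify(
    sources: List[Source],
    prior_plausibility: float,
    min_confirmations: int = 2,
) -> str:
    """Label a claim, letting authoritative evidence outrank the prior."""
    confirming = {
        s.domain for s in sources if s.confirms and s.domain in AUTHORITATIVE
    }
    # Assumed "Evidence Authority": enough trusted confirmations win outright,
    # no matter how improbable the event seemed beforehand.
    if len(confirming) >= min_confirmations:
        return "confirmed"
    # Some trusted support, but not enough to settle the question.
    if confirming:
        return "unverified"
    # Assumed "Hoax Classification": only an implausible claim with no
    # authoritative confirmation is called a likely hoax.
    return "likely hoax" if prior_plausibility < 0.1 else "unverified"
```

Under this reading, the failure described above corresponds to letting a low `prior_plausibility` decide the label even though two trusted outlets had confirmed the event.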

-

ChatGPT’s Inconsistency on Charlie Kirk’s Status

In one example of the limitations of large language models (LLMs), ChatGPT initially dismissed a claim about Charlie Kirk's death as a conspiracy theory, then verified and acknowledged the claim, and then reverted to its original stance. This inconsistency underscores the gap between the perceived intelligence of LLMs and their actual reliability, as they can confidently provide contradictory information. The incident is a reminder that while LLMs often appear intelligent, they are not infallible and can make errors in information processing. Understanding the strengths and weaknesses of AI is crucial as reliance on such technology increases.

-

Farmer Builds AI Engine with LLMs and Code Interpreter

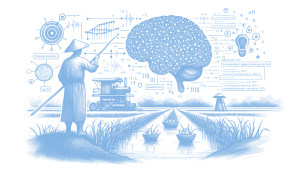

A Korean garlic farmer, who lacks formal coding skills, has developed a unique approach to building an "executing engine" using large language models (LLMs) and sandboxed code interpreters. By interacting with AI chat interfaces, the farmer structures ideas and runs them through a code interpreter to obtain executable results, emphasizing the importance of verifying real execution versus simulated outputs. This iterative process involves cross-checking results with multiple AIs to avoid hallucinations and ensure accuracy. Despite the challenges, the farmer finds value and insights in this experimental method, demonstrating how AI can empower individuals without technical expertise to engage in complex problem-solving and innovation. Why this matters: It highlights the potential of AI tools to democratize access to advanced technology, enabling individuals from diverse backgrounds to innovate and contribute to technical fields without traditional expertise.
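The distinction between real execution and simulated output can be made concrete with a small check: run the snippet and compare what it prints with what the model claimed it would print. This is a minimal sketch, not the farmer's setup; the helper names are hypothetical, and a plain subprocess with a timeout is only process isolation, not a true sandbox.

```python
import subprocess
import sys


def run_snippet(code: str, timeout: float = 5.0) -> str:
    """Execute Python code in a separate interpreter and return its stdout."""
    result = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    if result.returncode != 0:
        # Surface the real error instead of trusting a described outcome.
        raise RuntimeError(result.stderr.strip())
    return result.stdout.strip()


def matches_claim(code: str, claimed_output: str) -> bool:
    """True only if the actual output equals the output the model claimed."""
    return run_snippet(code) == claimed_output.strip()
```

A mismatch means the claimed output was simulated or wrong, which is the point at which cross-checking with a second model, as described above, becomes useful.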