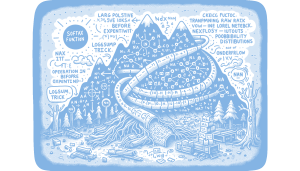

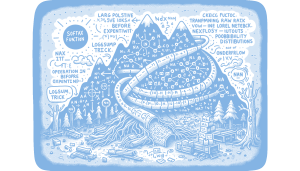

Softmax is an activation function that turns a neural network's raw outputs (logits) into a probability distribution, which makes predictions in multi-class classification tasks interpretable. A naive implementation, however, is numerically unstable: exponentiating a large positive logit overflows to infinity, exponentiating very negative logits underflows to zero, and the divisions and logarithms that follow produce NaN values and infinite losses that disrupt training. A stable implementation shifts the logits before exponentiation, typically by subtracting the maximum logit, which leaves the result mathematically unchanged while keeping every exponent at or below zero. It also computes the loss with the LogSumExp trick rather than taking the logarithm of already-computed probabilities. Together these steps keep gradients finite so that backpropagation proceeds normally. Why this matters: Numerical stability in Softmax implementations is critical for preventing training failures and maintaining the integrity of deep learning models.
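The shift and the LogSumExp trick can be sketched in a few lines. This is a minimal illustration in plain Python, not the implementation used by any particular framework; the function names `logsumexp`, `stable_softmax`, and `cross_entropy` and the example logits are assumptions chosen for this sketch.

```python
import math


def logsumexp(logits):
    """Return log(sum(exp(x) for x in logits)) without overflowing."""
    m = max(logits)
    # After subtracting the max, every exponent is <= 0, so exp() stays in (0, 1].
    return m + math.log(sum(math.exp(x - m) for x in logits))


def stable_softmax(logits):
    """Softmax computed on shifted logits; equal to the unshifted result in exact arithmetic."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)  # at least 1.0, because the max logit contributes exp(0) = 1
    return [e / total for e in exps]


def cross_entropy(logits, target):
    """Negative log-probability of class `target`, computed from logits directly.

    Uses -log softmax(z)[t] = logsumexp(z) - z[t], so no log of a tiny
    probability is ever taken.
    """
    return logsumexp(logits) - logits[target]


# Hypothetical extreme logits: math.exp(1000.0) on its own would overflow.
logits = [1000.0, 1001.0, 1002.0]
probs = stable_softmax(logits)
loss = cross_entropy(logits, target=0)
```

Because only differences between logits matter, `probs` here matches the softmax of `[0.0, 1.0, 2.0]`, and the loss stays finite even though the unshifted exponentials cannot be represented in double precision.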
