open source

-

TOPAS-DSPL: Dual-Stream Transformer for Reasoning

TOPAS-DSPL is a neuro-symbolic model that uses a dual-stream recursive transformer architecture for small-scale reasoning tasks. Its "Bicameral" latent space separates algorithmic planning from execution state, which reduces "Compositional Drift" relative to traditional monolithic models. With approximately 15 million parameters, it reaches 24% accuracy on the ARC-AGI-2 Evaluation Set, a significant improvement over standard Tiny Recursive Models. The architecture addresses the "forgetting" problem in recursive loops by decoupling rule generation from state updates, and the open-sourced training pipeline allows independent verification and further development. This matters because it shows that compact reasoning models can become both more accessible and more effective on complex problem-solving tasks.

-

15M Param Model Achieves 24% on ARC-AGI-2

Bitterbot AI has introduced TOPAS-DSPL, a compact recursive model with approximately 15 million parameters. It reaches 24% accuracy on the ARC-AGI-2 evaluation set, a significant improvement over the previous state of the art (SOTA) of 8% for models of similar size. The model uses a "Bicameral" architecture that splits work between a Logic Stream for algorithm planning and a Canvas Stream for execution, which addresses the compositional drift seen in standard transformers. Test-Time Training (TTT) additionally fine-tunes the model on a task's specific examples before it generates a solution. The entire pipeline, including data generation, training, and evaluation, has been open-sourced, so the community can verify the results and potentially reproduce them on consumer hardware such as an RTX 4090 GPU. This matters because it shows that gains in efficiency and accuracy can make sophisticated AI more accessible and verifiable.
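The split between a planning stream and an execution stream can be illustrated with a toy recursion. This is only a structural sketch, not the TOPAS-DSPL implementation: the names `DualState`, `update_logic`, and `update_canvas` are hypothetical, and the arithmetic update rules are placeholders standing in for learned transformer blocks. The one point it shows is that the canvas update reads the logic state but never overwrites it.

```python
from dataclasses import dataclass
from typing import List

Vector = List[float]


@dataclass
class DualState:
    logic: Vector   # hypothetical "plan" stream: which rule to apply
    canvas: Vector  # hypothetical "execution" stream: the working state


def update_logic(logic: Vector, canvas: Vector) -> Vector:
    # Placeholder rule: the plan keeps most of its own state and only
    # reads a coarse summary of the canvas.
    summary = sum(canvas) / len(canvas)
    return [0.9 * value + 0.1 * summary for value in logic]


def update_canvas(logic: Vector, canvas: Vector) -> Vector:
    # Placeholder rule: the canvas is modified under control of the plan,
    # but this function never writes back into the logic stream.
    gate = sum(logic) / len(logic)
    return [value + gate for value in canvas]


def run(state: DualState, steps: int) -> DualState:
    # Recursive loop: each step refines the plan, then executes it.
    for _ in range(steps):
        new_logic = update_logic(state.logic, state.canvas)
        new_canvas = update_canvas(new_logic, state.canvas)
        state = DualState(new_logic, new_canvas)
    return state


final = run(DualState(logic=[1.0, 1.0], canvas=[0.0, 0.0]), steps=1)
```

In a monolithic recursive model both roles would share one state vector, so every execution step could overwrite the plan; keeping two fields makes that separation explicit.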

-

Tencent HY-Motion 1.0: Text-to-Motion Model

Tencent HY-Motion 1.0 is an open-source, billion-parameter model that converts text into 3D character animations using the Diffusion Transformer (DiT) architecture and flow matching. It gives developers and creators high-fidelity, fluid, and diverse animations that can be integrated into existing 3D animation workflows. Its full-stage training strategy covers pre-training, supervised fine-tuning, and reinforcement learning, with the goal of physical plausibility and semantic accuracy across more than 200 motion categories. The release is presented as setting a new standard for instruction-following capability and motion quality. This matters because it makes it easier to create complex, realistic 3D animations from natural language, broadening the possibilities for content creation in digital media.
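For readers unfamiliar with flow matching, the sketch below shows the training target in its most common form, a straight-line path between a noise sample and a data sample. Whether HY-Motion 1.0 uses exactly this path is not stated in the summary, so treat it as an assumption; the function names are illustrative and the "motion" is just a short list of floats.

```python
from typing import List, Tuple

Vector = List[float]


def flow_matching_pair(x0: Vector, x1: Vector, t: float) -> Tuple[Vector, Vector]:
    """Return the interpolated point x_t and the velocity the model should predict.

    x0 is a noise sample, x1 is a data sample, and t lies in [0, 1].
    Assumes the linear path x_t = (1 - t) * x0 + t * x1.
    """
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    if len(x0) != len(x1):
        raise ValueError("x0 and x1 must have the same length")
    x_t = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]
    target_velocity = [b - a for a, b in zip(x0, x1)]  # d x_t / d t
    return x_t, target_velocity


def flow_matching_loss(predicted: Vector, target: Vector) -> float:
    # Mean squared error between predicted and target velocity.
    return sum((p - q) ** 2 for p, q in zip(predicted, target)) / len(target)


x_t, v = flow_matching_pair([0.0, 0.0], [2.0, 4.0], t=0.5)
```

During training, a network conditioned on the text prompt would receive `x_t` and `t` and be penalized with `flow_matching_loss` for deviating from `v`.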

-

Building Real-Time Interactive Digital Humans

Creating a real-time interactive digital human involves combining full-stack open-source technologies to simulate realistic human interaction. The process draws on advanced graphics, machine learning algorithms, and natural language processing so that the digital human can respond and interact in real time. Open-source tools give developers a cost-effective and flexible foundation that they can customize and improve continuously. This matters because it democratizes access to digital human technology, enabling more industries to integrate these interactive models into their applications.

-

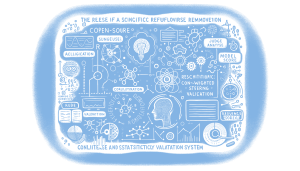

Open Source Code for Refusal Steering Paper Released

The open-source code release for the refusal steering paper introduces a method for surgical refusal removal that relies on statistical validation rather than intuition-based steering. Key features include judge scores for validating training data, automatic selection of optimal layers through correlation analysis, and confidence-weighted steering vectors. The implementation also offers automatic alpha optimization with early stopping and the ability to merge changes permanently into the model weights. Although it requires a more complex setup than simpler steering repositories, it validates each step statistically. This matters because it makes model adjustments more precise and reliable, reducing reliance on guesswork.
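The three ideas named above (confidence weighting, correlation-based layer selection, and a scalar alpha) can be sketched in a few lines. This is not the released code: the function names are hypothetical, activations are plain Python lists rather than model tensors, and the weighted difference of means is one common way to build a steering vector, assumed here for illustration.

```python
from math import sqrt
from typing import Dict, List, Sequence

Vector = List[float]


def weighted_mean(vectors: Sequence[Vector], weights: Sequence[float]) -> Vector:
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    dim = len(vectors[0])
    return [sum(w * v[i] for v, w in zip(vectors, weights)) / total for i in range(dim)]


def steering_vector(refused: Sequence[Vector], refused_conf: Sequence[float],
                    complied: Sequence[Vector], complied_conf: Sequence[float]) -> Vector:
    # Confidence-weighted difference of means: examples the judge is more
    # certain about contribute more to the direction.
    r = weighted_mean(refused, refused_conf)
    c = weighted_mean(complied, complied_conf)
    return [a - b for a, b in zip(r, c)]


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return 0.0 if sx == 0 or sy == 0 else cov / (sx * sy)


def select_layer(projections_by_layer: Dict[int, List[float]],
                 judge_scores: Sequence[float]) -> int:
    # Pick the layer whose projections onto its steering direction
    # correlate most strongly with the judge's refusal scores.
    return max(projections_by_layer,
               key=lambda layer: abs(pearson(projections_by_layer[layer], judge_scores)))


def apply_steering(hidden: Vector, direction: Vector, alpha: float) -> Vector:
    # Subtract the scaled refusal direction from a hidden state.
    return [h - alpha * d for h, d in zip(hidden, direction)]


direction = steering_vector([[1.0, 0.0], [3.0, 0.0]], [1.0, 1.0], [[0.0, 0.0]], [1.0])
best_layer = select_layer({3: [0.1, 0.2, 0.1, 0.2], 7: [0.0, 1.0, 2.0, 3.0]},
                          [0.0, 1.0, 2.0, 3.0])
```

An alpha search with early stopping would wrap `apply_steering` in a loop that raises `alpha` until a validation metric stops improving; that loop is omitted here because the summary does not describe the metric.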

-

Streamlining AI Paper Discovery with Research Agent

With an overwhelming number of AI research papers published each year, a new open-source pipeline called Research Agent aims to streamline the process of finding relevant work. The tool pulls recent arXiv papers from specific AI categories, filters them by semantic similarity to a research brief, classifies them into relevant categories, and ranks them by influence signals. It then presents the top-ranked papers with their abstracts and plain-English summaries. While the tool is a promising answer to AI paper fatigue, it faces challenges such as inaccurate summaries caused by LLM randomness and the non-stationary nature of influence prediction. The author is seeking feedback on better ranking signals and on potential failure modes. This matters because it addresses the challenge of keeping up with significant AI research amid an ever-growing volume of publications.
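The filter-then-rank flow described above can be sketched as follows. This is a simplified illustration, not Research Agent's code: the `Paper` fields and the `shortlist` function are hypothetical, token-set Jaccard overlap stands in for embedding-based semantic similarity, and `influence` is an assumed precomputed score. The classification step is omitted.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Paper:
    title: str
    abstract: str
    influence: float  # hypothetical precomputed influence signal


def tokens(text: str) -> Set[str]:
    return set(text.lower().split())


def jaccard(a: str, b: str) -> float:
    # Stand-in for semantic similarity; a real pipeline would compare embeddings.
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def shortlist(papers: List[Paper], brief: str,
              min_similarity: float = 0.1, top_k: int = 5) -> List[Paper]:
    # Step 1: keep only papers close enough to the research brief.
    relevant = [p for p in papers if jaccard(p.abstract, brief) >= min_similarity]
    # Step 2: rank the survivors by influence, highest first.
    relevant.sort(key=lambda p: p.influence, reverse=True)
    return relevant[:top_k]


papers = [
    Paper("A", "diffusion models for image generation", 0.2),
    Paper("B", "graph neural networks for chemistry", 0.9),
    Paper("C", "fast sampling for diffusion models", 0.7),
]
picked = shortlist(papers, "diffusion models sampling")
```

Filtering before ranking means a highly influential but off-topic paper (B in this example) never reaches the ranked list.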

-

Plano-Orchestrator: Fast Open Source LLMs for Multi-Agent Systems

Plano-Orchestrator is a new family of open-source large language models (LLMs) designed for rapid multi-agent orchestration, developed by the Katanemo research team. These models prioritize privacy, speed, and performance, enabling them to efficiently determine which agents should handle user requests and in what order, acting as a supervisory agent in complex multi-agent systems. Suitable for various domains, including general chat, coding tasks, and extensive multi-turn conversations, Plano-Orchestrator is optimized for low-latency production environments. This innovation aims to enhance the real-world performance and efficiency of multi-agent systems, offering a valuable tool for developers focused on integrating diverse agent functionalities.

-

EntropyGuard: Local CLI for Data Deduplication

To reduce API costs and improve data processing efficiency, a new open-source CLI tool called EntropyGuard was developed for local data cleaning and deduplication. It addresses the issue of duplicate content in document chunks, which can inflate token usage and costs when using services like OpenAI. The tool employs two stages of deduplication: exact deduplication using xxHash and semantic deduplication with local embeddings and FAISS. This approach has demonstrated significant cost savings, reducing dataset sizes by approximately 40% and enhancing retrieval quality by eliminating redundant information. This matters because it offers a cost-effective solution for optimizing data handling without relying on expensive enterprise platforms or cloud services.
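The two-stage idea can be sketched with the standard library alone. This is not EntropyGuard's code: `hashlib.blake2b` is swapped in for xxHash, and bag-of-words cosine similarity with a linear scan is swapped in for local embeddings and a FAISS index. The function names and the `threshold` parameter are hypothetical.

```python
import hashlib
from collections import Counter
from math import sqrt
from typing import List


def exact_dedup(chunks: List[str]) -> List[str]:
    # Stage 1: drop byte-identical chunks (after trimming whitespace)
    # by comparing short hashes instead of full strings.
    seen = set()
    unique = []
    for chunk in chunks:
        digest = hashlib.blake2b(chunk.strip().encode("utf-8"), digest_size=8).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(chunk)
    return unique


def bag_of_words(text: str) -> Counter:
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return 0.0 if norm_a == 0 or norm_b == 0 else dot / (norm_a * norm_b)


def semantic_dedup(chunks: List[str], threshold: float = 0.8) -> List[str]:
    # Stage 2: keep a chunk only if it is not too similar to any chunk
    # already kept. The first occurrence wins.
    kept: List[str] = []
    kept_vectors: List[Counter] = []
    for chunk in chunks:
        vector = bag_of_words(chunk)
        if all(cosine(vector, other) < threshold for other in kept_vectors):
            kept.append(chunk)
            kept_vectors.append(vector)
    return kept


def deduplicate(chunks: List[str], threshold: float = 0.8) -> List[str]:
    return semantic_dedup(exact_dedup(chunks), threshold)


cleaned = deduplicate([
    "The cat sat on the mat",
    "The cat sat on the mat",
    "the cat sat on the mat today",
    "Quarterly revenue grew by ten percent",
])
```

Running the cheap exact pass first shrinks the input to the more expensive similarity pass, which compares each chunk against everything kept so far. An approximate nearest-neighbor index would replace that linear scan at scale.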

-

Free Interactive Course on Diffusion Models

An interactive course has been developed to make diffusion models easier to understand, filling the gap between overly simplistic explanations and those that require advanced knowledge. The course includes seven modules and 90 challenges designed to engage users actively, and it does not require a background in machine learning. It is free and open source, and feedback on clarity and difficulty balance is welcome. This matters because it democratizes access to complex machine learning concepts, helping more people engage with and understand cutting-edge technology.

LLMPlot.com is a new platform designed to enhance the visual appeal of language model evaluation plots, which are often criticized for their lack of aesthetics. The tool is free and open source, allowing users to input model details, provider, and scores to generate visually appealing comparison plots. These plots are optimized for sharing on social media platforms like X, LinkedIn, and Reddit, making them accessible and engaging for a wider audience. This matters because it improves the communication and understanding of complex data through better visual representation.
