RAG systems

-

2026 Roadmap for AI Search & RAG Systems

A practical roadmap for modern AI search and Retrieval-Augmented Generation (RAG) systems emphasizes the need for robust, real-world applications beyond basic vector databases and prompts. Key components include semantic and hybrid retrieval methods, explicit reranking layers, and advanced query understanding and intent recognition. The roadmap also highlights the importance of agentic RAG, which involves query decomposition and multi-hop processing, as well as maintaining data freshness and lifecycle management. Additionally, it addresses grounding and hallucination control, evaluation criteria beyond superficial correctness, and production concerns such as latency, cost, and access control. This roadmap is designed to be language-agnostic and focuses on system design rather than specific frameworks. Understanding these elements is crucial for developing effective and efficient AI search systems that meet real-world demands.
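One common way to combine keyword and vector results in a hybrid retrieval step is reciprocal rank fusion (RRF). The roadmap does not name a specific fusion method, so the sketch below is only an illustration: the function name, the document IDs, and the constant `k=60` (a conventional default) are assumptions, not something taken from the article.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores 1 / (k + rank) in every list it appears in,
    and the per-list scores are summed.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a keyword index and a vector index.
keyword_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Documents that rank well in both lists rise to the top, and the fused list would then typically be passed to an explicit reranking layer.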

-

Improving RAG Systems with Semantic Firewalls

In the GenAI space, the common approach to building Retrieval-Augmented Generation (RAG) systems involves embedding data, performing a semantic search, and stuffing the context window with top results. This approach often leads to confusion as it fills the model with technically relevant but contextually useless data. A new method called "Scale by Subtraction" proposes using a deterministic Multidimensional Knowledge Graph to filter out noise before the language model processes the data, significantly reducing noise and hallucination risk. By focusing on critical and actionable items, this method enhances the model's efficiency and accuracy, offering a more streamlined approach to RAG systems. This matters because it addresses the inefficiencies in current RAG systems, improving the accuracy and reliability of AI-generated responses.
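The summary does not describe how the Multidimensional Knowledge Graph is built, so the following is only a toy stand-in for the general idea of a deterministic filter that runs before the model sees any context. The `Chunk` fields (`severity`, `actionable`) and the `firewall` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    severity: str     # hypothetical dimension, e.g. "critical" or "info"
    actionable: bool  # hypothetical dimension: does this item need action?


def firewall(chunks, allowed_severities=frozenset({"critical"})):
    """Deterministically drop retrieved chunks that fail explicit rules.

    No similarity scores are involved: a chunk either satisfies the
    rules or it never reaches the prompt.
    """
    return [
        c for c in chunks
        if c.actionable and c.severity in allowed_severities
    ]


# Hypothetical output of a semantic search step.
retrieved = [
    Chunk("Disk on node-7 is 98% full", "critical", True),
    Chunk("Disk usage policy was updated last year", "info", False),
    Chunk("Node-3 outage resolved yesterday", "critical", False),
]

context = firewall(retrieved)
print([c.text for c in context])
```

All three chunks are plausibly "relevant" to a query about disks and nodes, but only the first survives the filter, which is the subtraction the method's name refers to.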

-

Semantic Caching for AI and LLMs

Semantic caching is a technique used to enhance the efficiency of AI, large language models (LLMs), and retrieval-augmented generation (RAG) systems by storing and reusing previously computed results. Unlike traditional caching, which relies on exact matching of queries, semantic caching leverages the meaning and context of queries, enabling systems to handle similar or related queries more effectively. This approach reduces computational overhead and improves response times, making it particularly valuable in environments where quick access to information is crucial. Understanding semantic caching is essential for optimizing the performance of AI systems and ensuring they can scale to meet increasing demands.
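A minimal sketch of the lookup logic, under stated assumptions: the `embed` function here is a toy bag-of-words counter that only captures word overlap and stands in for a real embedding model, and the `SemanticCache` class and the `0.8` threshold are illustrative choices rather than anything prescribed by the article.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words vector (assumption: a real system would call an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold=0.8):
        self.threshold = threshold
        self.entries = []  # list of (embedding, cached response)

    def put(self, query, response):
        self.entries.append((embed(query), response))

    def get(self, query):
        """Return the cached response of the most similar query, or None."""
        q = embed(query)
        best_score, best_response = 0.0, None
        for emb, response in self.entries:
            score = cosine(q, emb)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None


cache = SemanticCache(threshold=0.8)
cache.put("What is the capital of France?", "Paris")

hit = cache.get("what is the capital of france")  # differs only in case and punctuation
miss = cache.get("How tall is Mount Everest?")    # unrelated query
print(hit, miss)
```

The threshold is the main design lever: set it too low and unrelated queries receive stale answers, set it too high and the cache behaves like an exact-match cache.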

-

Advancements in Llama AI and Local LLMs

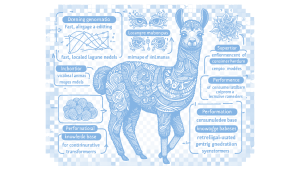

Advancements in Llama AI technology and local Large Language Models (LLMs) have been notable in 2025, with llama.cpp emerging as a preferred choice due to its superior performance and integration capabilities. Mixture of Experts (MoE) models are gaining traction for their efficiency in running large models on consumer hardware. New powerful local LLMs are enhancing performance across various tasks, while models with vision capabilities are expanding the scope of applications. Although continuous retraining of LLMs is difficult, Retrieval-Augmented Generation (RAG) systems are being used to mimic this process. Additionally, investments in high-VRAM hardware are facilitating the use of more complex models on consumer machines. This matters because these advancements are making sophisticated AI technologies more accessible and versatile for everyday use.

-

Advancements in Llama AI and Local LLMs in 2025

In 2025, advancements in Llama AI technology and the local Large Language Model (LLM) landscape have been notable, with llama.cpp emerging as a preferred choice due to its superior performance and integration with Llama models. The popularity of Mixture of Experts (MoE) models is on the rise, as they efficiently run large models on consumer hardware, balancing performance with resource usage. New local LLMs are making significant strides, especially those with vision and multimodal capabilities, enhancing application versatility. Additionally, Retrieval-Augmented Generation (RAG) systems are being employed to simulate continuous learning, while investments in high-VRAM hardware are allowing for more complex models on consumer machines. This matters because it highlights the rapid evolution and accessibility of AI technologies, impacting various sectors and everyday applications.

-

Advancements in Local LLMs and MoE Models

Significant advancements in the local Large Language Model (LLM) landscape have emerged in 2025, with notable developments such as the dominance of llama.cpp due to its superior performance and integration with Llama models. The rise of Mixture of Experts (MoE) models has allowed for efficient running of large models on consumer hardware, balancing performance and resource usage. New local LLMs with enhanced vision and multimodal capabilities are expanding the range of applications, while Retrieval-Augmented Generation (RAG) is being used to simulate continuous learning by integrating external knowledge bases. Additionally, investments in high-VRAM hardware are enabling the use of larger and more complex models on consumer-grade machines. This matters as it highlights the rapid evolution of AI technology and its increasing accessibility to a broader range of users and applications.

-

Autoscaling RAG Components on Kubernetes

Retrieval-augmented generation (RAG) systems enhance the accuracy of AI agents by using a knowledge base to provide context to large language models (LLMs). The NVIDIA RAG Blueprint facilitates RAG deployment in enterprise settings, offering modular components for ingestion, vectorization, retrieval, and generation, along with options for metadata filtering and multimodal embedding. RAG workloads can be unpredictable, requiring autoscaling to manage resource allocation efficiently during peak and off-peak times. By leveraging Kubernetes Horizontal Pod Autoscaling (HPA), organizations can autoscale NVIDIA NIM microservices like Nemotron LLM, Rerank, and Embed based on custom metrics, ensuring performance meets service level agreements (SLAs) even during demand surges. Understanding and implementing autoscaling in RAG systems is crucial for maintaining efficient resource use and optimal service performance.
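The scaling decision itself follows the rule given in the Kubernetes HPA documentation: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The sketch below reproduces that arithmetic in a simplified form. The function name, the replica bounds, and the example metric values are assumptions for illustration, and the real controller applies further behavior (such as stabilization windows) that is not modeled here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified HPA rule: ceil(current_replicas * current_metric / target_metric).

    If the ratio is within `tolerance` of 1.0, no scaling happens.
    The result is clamped to [min_replicas, max_replicas].
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# Hypothetical custom metric: average in-flight requests per pod, target 100.
scale_up = desired_replicas(2, current_metric=180, target_metric=100)
steady = desired_replicas(4, current_metric=105, target_metric=100)
scale_down = desired_replicas(4, current_metric=20, target_metric=100)
capped = desired_replicas(5, current_metric=500, target_metric=100)
print(scale_up, steady, scale_down, capped)
```

Choosing a custom metric that tracks user-facing latency or queue depth, rather than CPU alone, is what lets the autoscaler react to demand surges on LLM, rerank, and embedding services.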